OpenBookQA, inspired by open-book exams to assess human understanding of a subject.

Based on CNN articles from the DeepMind Q&A database, we have prepared a Reading Comprehension dataset of 120,000 pairs of questions and answers. The objective of the NewsQA dataset is to help the research community build algorithms capable of answering questions that require human-scale understanding and reasoning skills. In addition, we have included 16,000 examples where the answers (to the same questions) are provided by 5 different annotators, useful for evaluating the performance of the QA systems learned. NQ is a large corpus, consisting of 300,000 questions of natural origin, as well as human-annotated answers from Wikipedia pages, for use in training in quality assurance systems. Natural Questions (NQ), a new large-scale corpus for training and evaluating open-ended question answering systems, and the first to replicate the end-to-end process in which people find answers to questions. There are two modes of understanding this dataset: (1) reading comprehension on summaries and (2) reading comprehension on whole books/scripts. This dataset contains approximately 45,000 pairs of free text question-and-answer pairs. This dataset involves reasoning about reading whole books or movie scripts. NarrativeQA is a data set constructed to encourage deeper understanding of language. The data set consists of 113,000 Wikipedia-based QA pairs. HotpotQA is a set of question response data that includes natural multi-skip questions, with a strong emphasis on supporting facts to allow for more explicit question answering systems. It contains over 300K questions, 1.4M obvious documents and corresponding human-generated answers. These operations require a much more complete understanding of paragraph content than was required for previous data sets.ĭuReader 2.0 is a large-scale, open-domain Chinese data set for reading comprehension (RK) and question answering (QA). 
The CoQA contains 127,000 questions with answers, obtained from 8,000 conversations involving text passages from seven different domains.ĭROP is a 96-question repository, created by the opposing party, in which a system must resolve references in a question, perhaps to multiple input positions, and perform discrete operations on them (such as adding, counting or sorting). The data set is provided in two main training/validation/test sets: "random assignment", which is the main evaluation assignment, and "question token assignment".ĬoQA is a large-scale data set for the construction of conversational question answering systems. It contains 12,102 questions with one correct answer and four distracting answers. Each example includes the natural question and its QDMR representation.ĬommonsenseQA is a set of multiple-choice question answer data that requires different types of common sense knowledge to predict the correct answers. It consists of 83,978 natural language questions, annotated with a new meaning representation, the Question Decomposition Meaning Representation (QDMR).

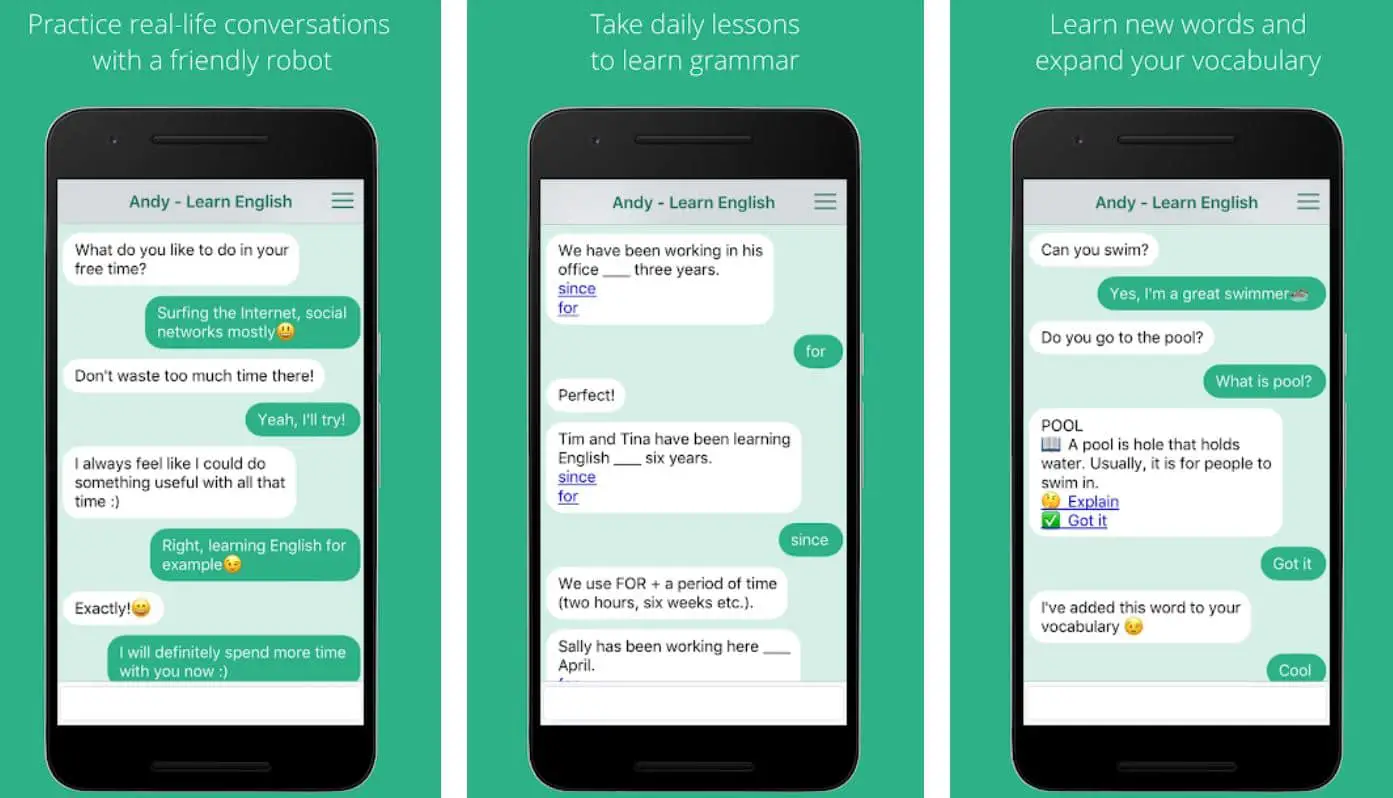

The data set covers 14,042 open-ended QI-open questions.īreak is a set of data for understanding issues, aimed at training models to reason about complex issues. Question-Answer Datasets for Chatbot TrainingĪmbigQA is a new open-domain question answering task that consists of predicting a set of question and answer pairs, where each plausible answer is associated with a disambiguated rewriting of the original question. We have drawn up the final list of the best conversational data sets to form a chatbot, broken down into question-answer data, customer support data, dialog data, and multilingual data. Chatbots are only as good as the training they are given. However, the main obstacle to the development of a chatbot is obtaining realistic and task-oriented dialog data to train these machine learning-based systems. A chatbot needs data for two main reasons: to know what people are saying to it, and to know what to say back.Īn effective chatbot requires a massive amount of training data in order to quickly resolve user requests without human intervention.
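Natural Questions provides several independent annotator answers for some questions. One common way to use such multi-reference data is to count a prediction as correct if it matches any one of the references after light normalization. The normalization below is modeled on the one in the SQuAD evaluation script: lowercase, strip punctuation and English articles, collapse whitespace. This is a minimal sketch under that assumption. It is not the official Natural Questions metric, and the function names are hypothetical.

```python
import re
import string
from typing import Iterable, List, Sequence


def normalize(text: str) -> str:
    """Lowercase, remove punctuation and articles (a/an/the), collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, references: Iterable[str]) -> bool:
    """True if the normalized prediction equals ANY normalized reference answer."""
    pred = normalize(prediction)
    return any(pred == normalize(ref) for ref in references)


def multi_reference_accuracy(
    predictions: Sequence[str], references_per_question: Sequence[List[str]]
) -> float:
    """Fraction of questions whose prediction matches at least one annotator's answer."""
    if not predictions:
        return 0.0
    hits = sum(
        exact_match(pred, refs)
        for pred, refs in zip(predictions, references_per_question)
    )
    return hits / len(predictions)
```

Usage example with made-up answers: `multi_reference_accuracy(["Paris.", "1970"], [["Paris", "Paris, France"], ["1969", "in 1969"]])` scores the first prediction as correct and the second as wrong, giving 0.5. Taking the best match over all references means that several annotators phrasing the same answer differently (or disagreeing) does not penalize a system that agrees with any one of them.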
